You’ve probably experienced this before: the cohort is finishing up, and every participant needs a polished resume before moving forward. You glance at the inbox and see dozens of drafts stacked up.

Your coaching team is small, already juggling mock interviews, tracking assignments, and handling follow-ups from earlier cohorts.

This is the reality for many workforce programs: the demand for high-quality resumes outpaces staff capacity. Every coach wants to give meaningful feedback, but reviewing every document line by line isn’t realistic — not with five coaches, 150 learners, and a week to show results.

The stakes are real. Inconsistent reviews can leave some participants with subpar resumes, while staff burnout grows behind the scenes. Programs risk delayed completion, frustrated coaches, and messy reporting for funders.

The solution isn’t just more hours or extra hires.

It’s about smarter workflows that lift resume quality without manually editing every file.

In the steps below, we’ll cover practical ways to make that happen — from peer-to-peer reviews to technology-driven tools that automate the basics and deliver clean, funder-ready data.

Why the “Red Pen” Method Fails at Scale

For years, many programs relied on the classic “red pen” approach: coaches opening every resume, editing line by line, and sending drafts back and forth.

But at scale, that process becomes impossible to sustain.

The numbers don’t lie

Consider a typical cohort:

- 20 minutes per resume × 100 participants = 2,000 minutes, or 33+ hours.

- Multiple revision cycles or late submissions push this well beyond a full workweek.
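To see how quickly the hours add up, here is the same arithmetic as a small Python calculation. The 20-minute and 100-participant figures come from the example above; the second review round is a hypothetical input added to show the effect of revision cycles.

```python
def manual_review_hours(participants: int, minutes_per_resume: float, review_rounds: int = 1) -> float:
    """Total coach hours needed to review every resume `review_rounds` times."""
    total_minutes = participants * minutes_per_resume * review_rounds
    return total_minutes / 60

single_pass = manual_review_hours(100, 20)      # 2,000 minutes of reading and editing
two_passes = manual_review_hours(100, 20, 2)    # hypothetical second revision cycle

print(f"One pass:   {single_pass:.1f} hours")   # 33.3 hours
print(f"Two passes: {two_passes:.1f} hours")    # 66.7 hours
```

With just one extra revision round, the total passes 66 hours, well over a 40-hour workweek.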

Even if coaches manage to get through the pile, each applies their own standards.

One focuses on grammar, another on formatting, another on content clarity. The result is a patchwork of quality that frustrates both students and funders.

Beyond inefficiency, manual review drives burnout.

Coaches spend hours on repetitive corrections instead of guiding participants through high-value activities like mock interviews or targeted mentoring.

Ripple effects of manual editing

- Staff burnout. Constantly reading and correcting resumes is mentally exhausting. Coaches start to dread their inboxes, which can erode morale.

- Inconsistent quality. Each coach has a different style and different areas of focus. The resulting resumes vary widely in quality, which serves neither students nor funders.

- Program delays. Backlogs force compressed feedback cycles or postponed deadlines, impacting mock interviews and downstream placement activities.

- Funder friction. Funders expect completion data. When tracking happens manually, it is messy and error-prone, which makes reporting tedious and less reliable.

Over time, this creates a fragile, reactive, and unsustainable system.

Step 1: Implement Peer-to-Peer Review Workshops

The first operational fix is simple, immediate, and low-cost: introduce peer-to-peer reviews.

Why it works

Participants review each other’s resumes using a standardized rubric that covers formatting, clarity, grammar, and relevance.

This approach allows multiple rounds of iteration before a coach sees the document, lifting baseline quality and reducing bottlenecks.

Peer reviews also encourage engagement.

Participants internalize professional standards by seeing different approaches, and accountability to peers increases timely submissions.

By the time the resume reaches a coach, it is typically 70–80% complete, letting staff focus on learners who need more guidance.

How to design effective peer-to-peer workshops

- Use a clear rubric. Include formatting, grammar, clarity, and relevance. Standardization ensures consistency across participants.

- Small groups or pairs. These encourage detailed feedback without overwhelming any single participant.

- Iterative review cycles. Allow at least two rounds of peer feedback to reinforce learning.

- Guided facilitation. Coaches can provide examples or a mini-training on “how to give constructive feedback.”
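Running at least two review rounds means deciding who reviews whom in each round. One simple way to do that, sketched here in Python as an assumption rather than a prescribed method, is a rotation: in each round the list shifts by one more position, so nobody reviews their own resume and every participant gets a different reviewer each round. The function name and participant names are hypothetical.

```python
from typing import Dict, List

def assign_peer_reviews(participants: List[str], rounds: int = 2) -> List[Dict[str, str]]:
    """Return one {reviewer: author} mapping per round.

    In round r, the participant at position i reviews the participant at
    position (i + r) % n. Everyone reviews exactly one resume and is reviewed
    exactly once per round, and each round brings a new reviewer as long as
    there are more participants than rounds.
    """
    n = len(participants)
    if rounds >= n:
        raise ValueError("Need more participants than review rounds for distinct reviewers")
    schedule = []
    for r in range(1, rounds + 1):
        schedule.append({participants[i]: participants[(i + r) % n] for i in range(n)})
    return schedule

cohort = ["Ana", "Ben", "Chloe", "Dev", "Eli"]
for round_no, pairs in enumerate(assign_peer_reviews(cohort), start=1):
    print(f"Round {round_no}:")
    for reviewer, author in pairs.items():
        print(f"  {reviewer} reviews {author}")
```

A rotation keeps the workload even (one resume per reviewer per round) while still exposing each author to several different perspectives.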

Real-world benefits

- Coaches focus on students who truly need attention, not every draft.

- Participants internalize professional standards and learn by seeing different approaches.

- Programs reduce backlog and increase the likelihood of completing resumes on time.

Short on staff time? Download our Free Resume Workshop Toolkit.

Get 4 ready-to-use resources to help your job seekers build employer-ready resumes in under an hour, including a presentation deck, peer-review checklist, “Common Resume Mistakes” handout, and our “Mad Libs” bullet point builder.

Step 2: Use Technology to Automate the Baseline

The next layer is technology: automating baseline checks so coaches don’t spend time on formatting, spelling, or standard language issues.

How automated tools help

Tools like Big Interview can help you scale resume reviews by:

- Standardizing formatting and ensuring every resume adheres to your template and style guide.

- Running grammar and spelling checks that eliminate basic errors before human review.

- Aligning with your program expectations and ensuring resumes meet funder or employer standards without manual intervention.

- Offering customizable scoring, so programs can weight key sections to match specific cohort goals.

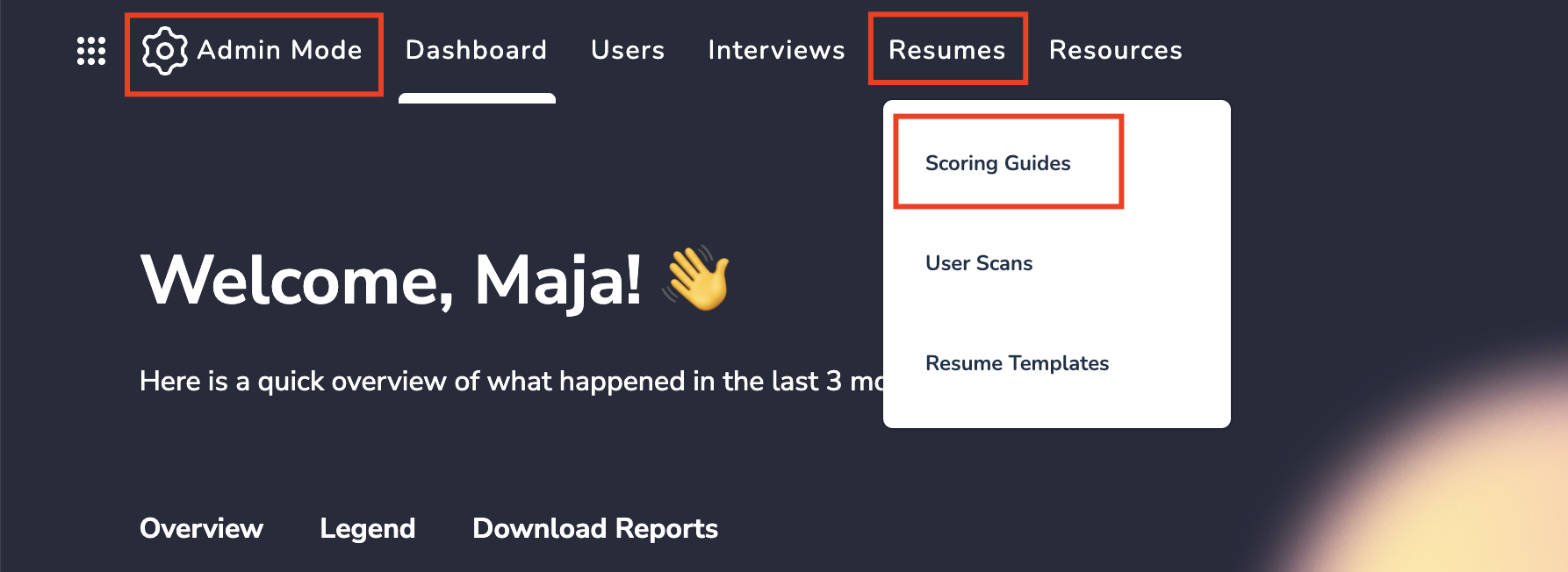

Programs achieve this consistency by creating ResumeAI Scoring Guides inside Big Interview for each cohort.

Instead of reviewing every document manually, program leaders define the standards once — and the system applies them automatically to every resume submitted.

The scoring guide essentially turns your program’s expectations into a shared framework. It defines:

- The sections you want participants to include.

- The level of quality you expect.

- The criteria used to evaluate each resume.

From there, ResumeAI provides instant, standardized feedback to every participant based on those exact criteria.

That means each student is evaluated against the same expectations, eliminating the common problem of inconsistent feedback from different mentors or coaches. Participants know exactly what to improve, and coaches can focus their time only where it’s most needed.

When setting up a scoring guide, programs can tailor the evaluation to match their goals.

For example, you can choose the primary focus of the resume (such as education or experience) and define a target score participants should reach before their resume is considered “ready.”

You can also customize the importance of each evaluation criterion ResumeAI uses to score a document, along with the options inside it. These criteria include:

- Readability – clarity, grammar, and overall writing quality.

- Credibility – how well accomplishments and experience are communicated.

- ATS Fit – how likely the resume is to perform well in applicant tracking systems.

- Format – structure, layout, and professional presentation.

This flexibility allows you to align the evaluation with your curriculum.

For example, if your program doesn’t require a summary statement or any other resume section, you can disable it.

If you want participants to receive guidance on something without losing points, you can mark it as feedback-only.

You can also add custom advice in the Best Practices section so participants receive coaching tailored to your program’s standards while they build their resumes.
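ResumeAI’s actual scoring formula isn’t described here, so the Python sketch below is only an assumption about how a scoring guide like this could work: each criterion carries a weight and a mode, feedback-only and disabled criteria are left out of the score, and a resume counts as “ready” once its weighted average reaches the target. The `Criterion` class, the weights, the modes, and the 75-point target are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List

@dataclass
class Criterion:
    weight: float          # relative importance; the scale is hypothetical
    mode: str = "scored"   # "scored", "feedback_only", or "disabled"

# Hypothetical scoring guide for one cohort. The criterion names follow the
# article; the weights, modes, and target score are illustrative values only.
GUIDE: Dict[str, Criterion] = {
    "Readability": Criterion(weight=3),
    "Credibility": Criterion(weight=4),
    "ATS Fit": Criterion(weight=2),
    "Format": Criterion(weight=1, mode="feedback_only"),
}
TARGET_SCORE = 75.0

def weighted_score(guide: Dict[str, Criterion], scores: Dict[str, float]) -> float:
    """Weighted average of 0-100 criterion scores, counting only "scored" criteria."""
    active = {name: c for name, c in guide.items() if c.mode == "scored"}
    total_weight = sum(c.weight for c in active.values())
    if total_weight == 0:
        raise ValueError("The scoring guide has no scored criteria")
    return sum(c.weight * scores[name] for name, c in active.items()) / total_weight

def feedback_notes(guide: Dict[str, Criterion], scores: Dict[str, float]) -> List[str]:
    """Feedback-only criteria are shown to the participant but never cost points."""
    return [f"{name}: {scores[name]:.0f}/100 (feedback only)"
            for name, c in guide.items() if c.mode == "feedback_only"]

def is_ready(guide: Dict[str, Criterion], scores: Dict[str, float], target: float) -> bool:
    return weighted_score(guide, scores) >= target

# Example: one participant's per-criterion scores (made-up numbers).
scores = {"Readability": 80, "Credibility": 70, "ATS Fit": 90, "Format": 40}
print(f"Weighted score: {weighted_score(GUIDE, scores):.1f}")  # (3*80 + 4*70 + 2*90) / 9 = 77.8
print("Ready:", is_ready(GUIDE, scores, TARGET_SCORE))
for note in feedback_notes(GUIDE, scores):
    print(note)
```

Because Format is marked feedback-only, its low score of 40 still reaches the participant as guidance but leaves the weighted score untouched.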

Operational advantages

The operational benefit is clear — you get a system where:

- Participants follow clear instructions from the start.

- ResumeAI scales your resume reviews and evaluates every submission using the same, consistent framework.

- Coaches spend their time on strategic, high-value guidance, such as preparing candidates for technical interviews, teaching storytelling and confidence-building, and employer-specific preparation, instead of basic corrections like spelling and formatting.

- Large cohorts can be supported without adding staff.

Programs using automated tools have reported reducing coach editing time by 60–70%, making it feasible to scale even to thousands of learners and freeing staff to focus on high-value coaching and interview prep.

Step 3: Create Funder-Ready Evidence Without Spreadsheets

Reporting is often the hidden workload behind resume review. Funders and leadership teams need clear evidence that services were delivered, but traditional methods (spreadsheets, manual cross-checks, reconciling participants who use multiple email addresses) are slow and error-prone.

Digital workflows simplify the process:

- Completion tracking is automatic, showing who submitted, revised, and reached baseline readiness.

- Scoring is standardized, ensuring consistent, auditable data.

- Exportable reports streamline funder reporting and internal dashboards.
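How Big Interview identifies participants internally isn’t covered here. As an illustration only, the Python sketch below shows the general idea behind automatic completion tracking: map every email address a participant uses to one participant ID, collapse submissions onto that ID, derive a status for each person, and export the result as CSV. The alias table, submission log, status labels, and 75-point readiness threshold are hypothetical.

```python
import csv
import sys
from collections import defaultdict
from typing import Dict, List, Tuple

# Hypothetical alias table: one participant may submit from several addresses.
EMAIL_TO_PARTICIPANT: Dict[str, str] = {
    "ana@example.org": "P001",
    "ana.personal@example.com": "P001",
    "ben@example.org": "P002",
    "chloe@example.org": "P003",
}

# Hypothetical submission log in time order: (email used, resume score 0-100).
SUBMISSIONS: List[Tuple[str, float]] = [
    ("ana@example.org", 62.0),
    ("Ana.Personal@example.com", 81.0),
    ("ben@example.org", 58.0),
    ("unknown@example.net", 70.0),
]

READY_SCORE = 75.0  # assumed baseline-readiness threshold

def completion_report(alias: Dict[str, str], submissions: List[Tuple[str, float]], ready_score: float):
    """Collapse submissions onto participant IDs and derive one status per participant."""
    history: Dict[str, List[float]] = defaultdict(list)
    unmatched: List[str] = []
    for email, score in submissions:
        pid = alias.get(email.strip().lower())  # normalize case and whitespace before lookup
        if pid is None:
            unmatched.append(email)  # flag for a human to resolve rather than guessing
        else:
            history[pid].append(score)

    rows = []
    # Every participant in the alias table, including those with no submissions.
    for pid in sorted(set(alias.values())):
        scores = history.get(pid, [])
        if not scores:
            status = "not submitted"
        elif scores[-1] >= ready_score:
            status = "ready"
        elif len(scores) > 1:
            status = "revised"
        else:
            status = "submitted"
        latest = scores[-1] if scores else ""
        rows.append({"participant": pid, "submissions": len(scores),
                     "latest_score": latest, "status": status})
    return rows, unmatched

rows, unmatched = completion_report(EMAIL_TO_PARTICIPANT, SUBMISSIONS, READY_SCORE)
writer = csv.DictWriter(sys.stdout, fieldnames=["participant", "submissions", "latest_score", "status"])
writer.writeheader()
writer.writerows(rows)
print("Unmatched emails:", unmatched)
```

Unmatched addresses are listed rather than guessed at, so staff can resolve each one once instead of cross-checking spreadsheets every reporting cycle.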

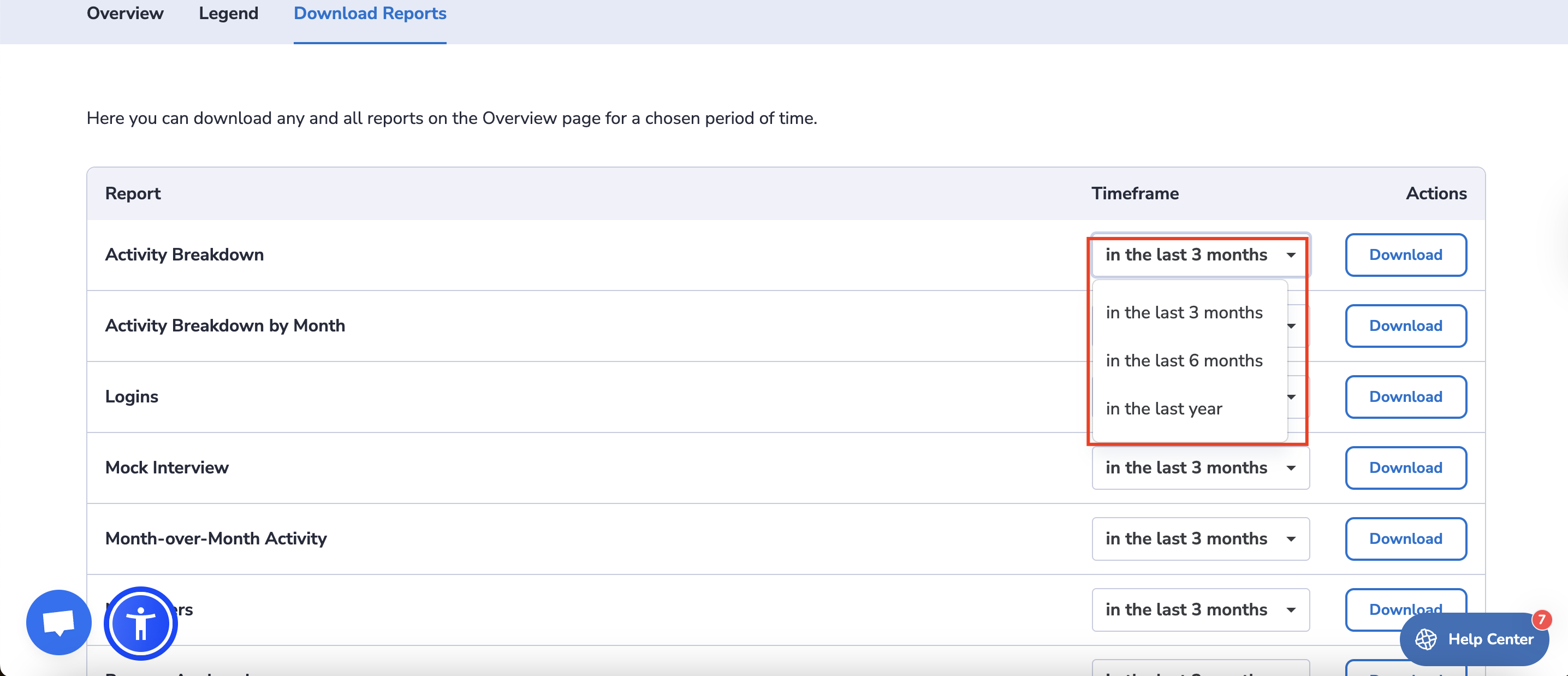

Programs that use Big Interview can generate this kind of reporting directly from the platform, eliminating the need for manual spreadsheets or cross-checking multiple systems.

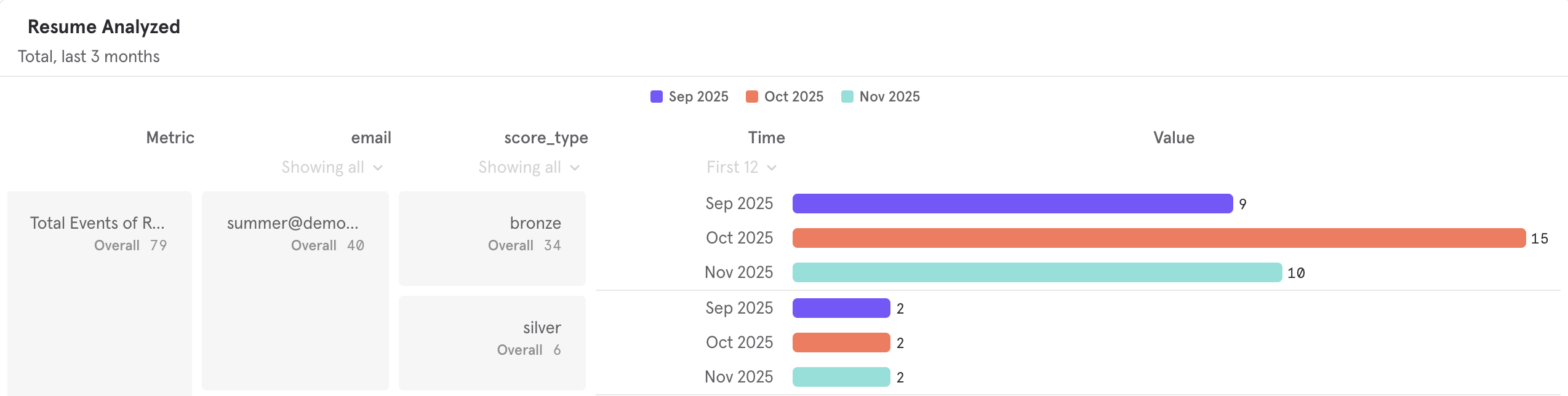

The Admin Dashboard provides built-in analytics that track how participants engage with resume assignments. Because the reporting is integrated into the platform, program leaders can quickly see who is participating and whether they are completing key milestones.

For example, the dashboard provides visibility into metrics such as:

- New users and logins, helping you track overall adoption across cohorts.

- Activity breakdowns, showing how learners are using different parts of the platform.

- Month-over-month engagement trends, making it easier to measure program momentum.

- Resumes analyzed, which indicates how many participants have submitted their resumes for feedback.

For resume-specific reporting, the Resume Analyzed report shows how participants interact with the resume tools — including resumes submitted, feedback accessed, and time spent reviewing their results. This helps program teams understand whether learners are actively improving their resumes or simply submitting once and moving on.

All analytics are refreshed regularly and can be downloaded directly from the dashboard for the last 3, 6, or 12 months, making it easy to generate reports for funders, leadership updates, or internal program evaluation without contacting support or compiling data manually.

The result is a clearer picture of participation and progress across your cohorts — and clean, funder-ready data without the spreadsheet overhead.

Scaling Resume Reviews Without Adding Headcount

By combining peer-to-peer workshops, automated baseline checks, and digital tracking, programs can lift the resume burden from staff while maintaining quality and accountability.

The benefits are tangible:

- Students iterate on their own resumes before coach review.

- Coaches focus on high-impact mentoring instead of repetitive editing.

- Program reporting becomes seamless and funder-ready.

- Cohorts of hundreds or thousands can be supported without hiring additional staff.

With this workflow, resume season shifts from a stressful bottleneck to a structured, scalable, and measurable process. Staff are less burned out, students receive consistent, professional documents, and programs can confidently demonstrate impact.

FAQ

How many resumes can a small coaching team realistically review without burning out?

With manual line-by-line review, even a team of five coaches can realistically cover only about 30–40 resumes per week if each resume takes 20–25 minutes. Anything beyond that quickly becomes unmanageable and increases the risk of inconsistent quality. That’s why peer review and automation are critical for scaling.

Can peer-to-peer review really maintain quality for funder reporting?

Yes. When combined with a clear rubric and structured cycles, peer review ensures resumes reach a baseline standard before staff intervention. Coaches then focus only on learners who need extra guidance, which keeps overall quality consistent and measurable for funders.

What parts of a resume can automation handle effectively?

Automated tools like Big Resume handle formatting consistency; spelling, grammar, and punctuation; and baseline scoring for quick comparison across participants. Automation isn’t meant to replace coaching, but it removes repetitive tasks, freeing staff for high-value feedback.

Will students participate if they’re reviewing each other’s resumes?

Participation increases when peer review is structured and guided. Clear instructions, a simple rubric, and examples of strong resumes make the process feel meaningful. Students often engage more knowing their peers will see their work and provide actionable feedback.

How do you track completion across multiple cohorts or students with multiple email addresses?

Digital workflows automatically track submission and revision status, even across large cohorts. Participants are identified uniquely in the system, so manual cross-referencing or spreadsheet juggling is unnecessary.

What if a student still needs additional help after peer review and automation?

That’s exactly where coaches focus their energy. By the time resumes reach staff, the baseline quality is already high, so intervention is targeted, efficient, and impactful.

How do these strategies help programs meet funder expectations?

Funders often require evidence that participants completed career readiness tasks. With peer review, automated baseline checks, and digital tracking, programs can generate auditable reports showing participation, revision cycles, and quality scores — all without spending hours on spreadsheets.